- PyPDF2 (to convert simple, text-based PDF files into text readable by Python)
- textract (to convert non-trivial, scanned PDF files into text readable by Python)

Note: I have attempted three approaches for this task. The libraries above suffice for approach 1. I have only touched upon the two other approaches, which I found online; treat them as alternatives.

Jupyter Notebook: All necessary remarks are denoted with ‘#’. Down below is the Jupyter notebook with all three approaches. Take a look!

- Step 2: Convert the PDF file to txt format and read the data.
- Step 3: Use the “.findall()” function of regular expressions to extract keywords.
- Step 4: Save the list of extracted keywords in a DataFrame.
- Step 5: Apply the concept of TF-IDF to calculate the weight of each keyword.
- Step 6: Save the results in a DataFrame and use “.sort_values()” to arrange the keywords in order.

I hope you find this tutorial fruitful and worth reading.

Find the GitHub repo HERE!

```python
# modules for generating the word cloud
from os import path, getcwd
from PIL import Image
import numpy as np
import matplotlib.pyplot as plt
from wordcloud import WordCloud

# Jupyter magic: render plots inside the notebook (remove when running as a script)
%matplotlib inline

d = getcwd()
with open('nlp.txt', 'r') as f:
    text = f.read()

# Image link = ' '
# The mask image gives the word cloud its shape
mask = np.array(Image.open(path.join(d, "batman.jpg")))

wc = WordCloud(background_color="black", max_words=3000, mask=mask,
               max_font_size=30, min_font_size=0.00000001,
               random_state=42)
wc.generate(text)

plt.figure()  # the figsize value is missing in the source; matplotlib's default is used
plt.imshow(wc, interpolation="bilinear")
plt.axis("off")
# pad_inches is cut off after "0." in the source
plt.savefig('bat_wordcloud.jpg', bbox_inches='tight', pad_inches=0.)
```

Also, I am sure there are plenty of other approaches to this task. Do share them in the comment section if you have come across any.
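The extraction and weighting steps above (`findall()`, TF-IDF, sorting) can be sketched in plain Python 3. This is only a minimal sketch, not the notebook's code. It assumes the PDF text has already been read into strings, and the two sample documents, the keyword list, and the function names are hypothetical. To stay within the standard library, it swaps the DataFrame and `.sort_values()` for a list of tuples and `sorted()`. The weighting used here (term frequency times log(N / document frequency)) is also an assumption, since the tutorial does not say which TF-IDF variant it applies.

```python
import math
import re
from collections import Counter


def extract_keywords(text, keywords):
    """Return every occurrence of the given keywords in text, lowercased."""
    pattern = r"\b(?:" + "|".join(re.escape(k) for k in keywords) + r")\b"
    return [m.lower() for m in re.findall(pattern, text, flags=re.IGNORECASE)]


def tfidf_weights(documents, keywords):
    """Weight each keyword in documents[0] by tf * log(N / df), highest first."""
    counts = [Counter(extract_keywords(doc, keywords)) for doc in documents]
    n_docs = len(documents)
    target = counts[0]
    total = sum(target.values()) or 1  # avoid division by zero
    weights = []
    for kw in keywords:
        key = kw.lower()
        df = sum(1 for c in counts if c[key] > 0)  # documents containing kw
        if df == 0:
            continue  # keyword never appears, so it gets no weight
        tf = target[key] / total
        weights.append((kw, tf * math.log(n_docs / df)))
    # Plain-Python stand-in for DataFrame.sort_values(ascending=False)
    return sorted(weights, key=lambda pair: pair[1], reverse=True)


# Hypothetical sample data standing in for text extracted from PDFs
documents = [
    "Text mining and text retrieval use keywords.",
    "Keywords help readers.",
]
keywords = ["text", "keywords", "retrieval"]

for keyword, weight in tfidf_weights(documents, keywords):
    print(keyword, round(weight, 4))
```

A keyword that occurs in every document gets a weight of zero under this formula, which is why common terms sink to the bottom of the ranking.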

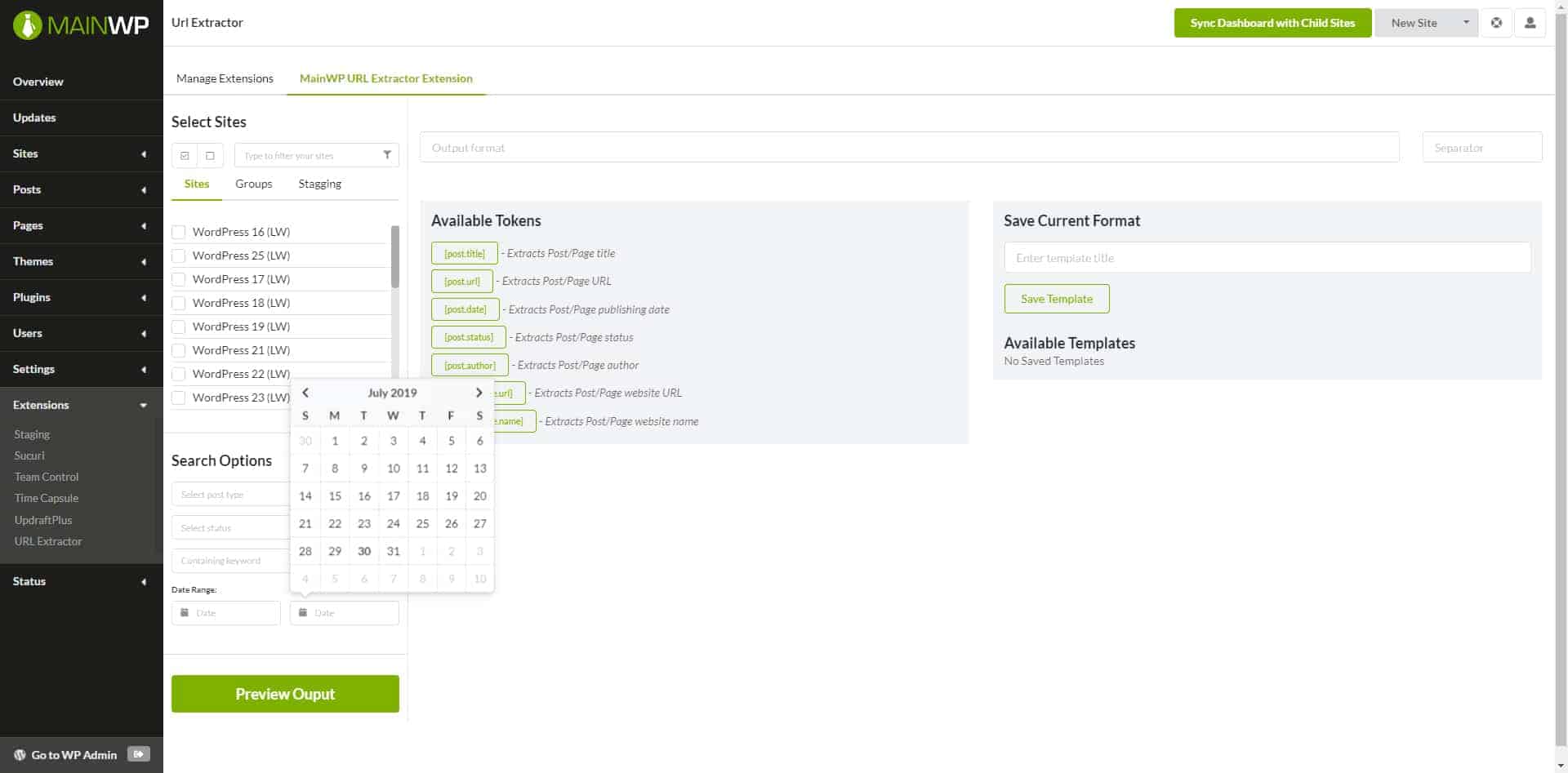

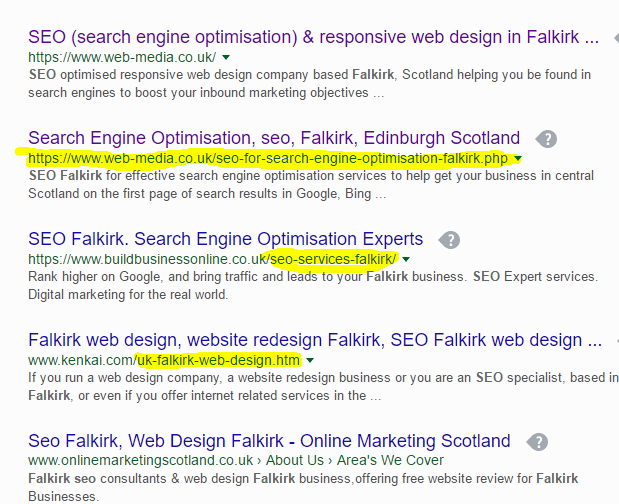

How to Extract Keywords from PDFs and arrange in order of their weights using Python

Keyword extraction is nothing but the task of identifying the terms that best describe the subject of a document. “Key phrases”, “key terms”, “key segments” or just “keywords” are the different names often used for the terms that represent the most relevant information contained in a document. Although they may sound distinct, they all serve the same purpose: characterizing the topic discussed in a document.

Why extract keywords?

Extracting keywords is one of the most important tasks when working with text data in the domains of Text Mining, Information Retrieval and Natural Language Processing.

Readers benefit from keywords because they can judge more quickly whether the given text is worth reading or not.

Website makers benefit from keywords because they can group similar content by topic.

Algorithm programmers benefit from keywords because they reduce the dimensionality of text to the most important features.

Let’s get down to the nitty-gritty of the topic. Up next follows a tutorial on how you can parse a PDF file and convert it into a list of keywords. We will learn and cement our understanding by working through a hands-on problem, so code along!

Problem Statement - Given a particular PDF/text document, how do you extract keywords and arrange them in order of their weightage using Python?

Dependencies: (I have used Python 2.7.15 for this tutorial.) You will need the libraries mentioned below installed on your machine for the task. In case you don’t have them, I have inserted the install commands for each dependency in the code block below, which you can type at the Command Prompt on Windows or in the Terminal on macOS.

The text is split into words by word boundaries. Each word is then compared with each keyword. In the future, we may add other types of boundaries to choose from.
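The matching described above, splitting the text on word boundaries and comparing each word with each keyword, might look like the sketch below. The function name `count_keywords`, the `case_sensitive` flag, and the use of the regex `\w+` as the definition of a word are assumptions for illustration, not the tool's actual implementation. Because it compares whole words, it only handles single-word keywords.

```python
import re


def count_keywords(text, keywords, case_sensitive=False):
    """Count exact whole-word matches of each keyword in text."""
    # \w+ treats every run of letters, digits and underscores as one word
    words = re.findall(r"\w+", text)
    if not case_sensitive:
        words = [w.lower() for w in words]

    counts = {keyword: 0 for keyword in keywords}
    for word in words:
        for keyword in keywords:
            target = keyword if case_sensitive else keyword.lower()
            if word == target:
                counts[keyword] += 1
    return counts


sample = "Python is fun. I like python, not pythons."
print(count_keywords(sample, ["python", "fun"]))
print(count_keywords(sample, ["python", "fun"], case_sensitive=True))
```

Whole-word comparison means "pythons" does not count as a match for "python", which is the practical effect of splitting on word boundaries instead of searching for substrings.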
One of the most common strategies for search engine optimization is structuring website content so that the most important keyword phrases of.

SEO Agility Tools meta tag extractor is a free tool to extract meta tags from web pages as quickly as possible.

- You can pass in any number of keywords that you want to count.
- You can combine Start URLs, Pseudo URLs and a link selector to traverse any number of pages across websites. Check our scraping tutorial on how to use these.
- You can specify maxDepth and maxPagesPerCrawl to limit the scope of the scrape. So if you want just the start URLs, set maxDepth to 0, etc.
- You can pick case-sensitive search and search through scripts.
- You can choose to scrape with or without a browser. The browser is more expensive but allows JavaScript rendering and waiting. With the browser, you can use many additional features.
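To illustrate how limits such as maxDepth and maxPagesPerCrawl could bound a crawl, here is a minimal breadth-first sketch. It walks a hypothetical in-memory link graph instead of fetching real pages. The `crawl` function, its snake_case parameter names, and the choice to count start URLs toward the page limit are all assumptions; the actual scraper may behave differently.

```python
from collections import deque


def crawl(start_urls, links, max_depth, max_pages):
    """Visit pages breadth-first, stopping at max_depth and max_pages."""
    start_urls = list(dict.fromkeys(start_urls))  # drop duplicates, keep order
    seen = set(start_urls)
    queue = deque((url, 0) for url in start_urls)
    visited = []

    while queue and len(visited) < max_pages:
        url, depth = queue.popleft()
        visited.append(url)
        if depth >= max_depth:
            continue  # do not follow links beyond the depth limit
        for next_url in links.get(url, []):
            if next_url not in seen:
                seen.add(next_url)
                queue.append((next_url, depth + 1))
    return visited


# Hypothetical site structure: page -> pages it links to
links = {"a": ["b", "c"], "b": ["d"], "c": ["d"], "d": []}

print(crawl(["a"], links, max_depth=0, max_pages=10))  # start URLs only
print(crawl(["a"], links, max_depth=2, max_pages=10))
```

With a depth limit of 0 only the start URLs are visited, matching the behaviour the feature list describes.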

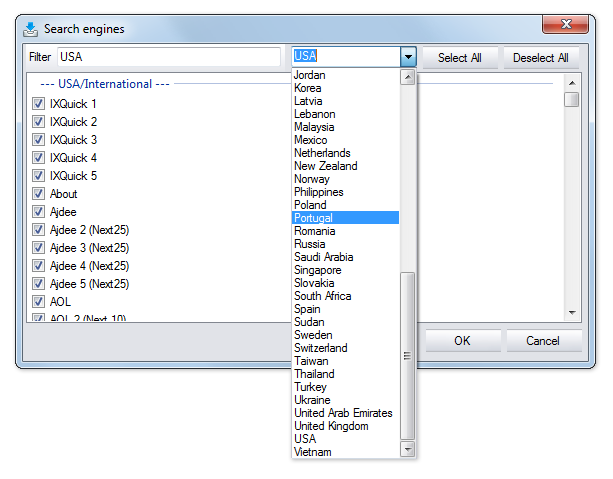

Deeply crawls a website and counts how many times the provided keywords are found on the page.
